WebRTC Stack

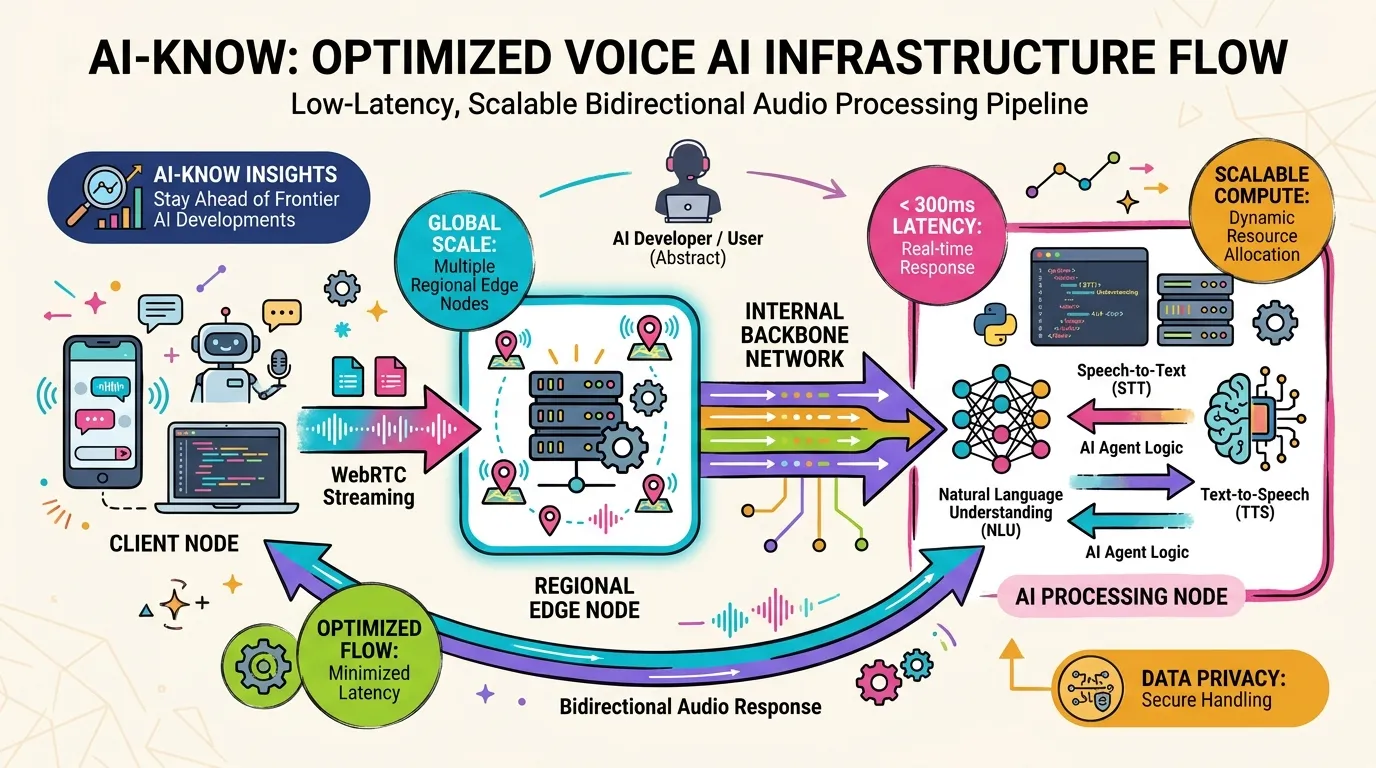

A WebRTC Stack is the full set of software components and infrastructure layers required to deliver real-time audio and video communication over WebRTC, which is itself a family of protocols and APIs rather than a single protocol. The stack covers more than a WebRTC library: signaling servers, STUN/TURN servers, edge nodes, media gateways, codec selection logic, and packet loss recovery mechanisms are all part of it, and together they determine end-to-end audio quality and latency.
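To make the STUN/TURN part of that list concrete, here is a minimal sketch of how a client might describe those servers when setting up a connection. The `IceServer` and `PeerConfig` types mirror the shape of the browser's `RTCConfiguration` but are declared locally so the snippet runs outside a browser. The helper `buildPeerConfig`, the `example.org` URLs, and the credentials are hypothetical placeholders, not part of any real deployment.

```typescript
// Local types mirroring the shape of the browser's RTCConfiguration,
// declared here so the sketch also runs outside a browser (e.g. in Node).
interface IceServer {
  urls: string | string[];
  username?: string;
  credential?: string;
}

interface PeerConfig {
  iceServers: IceServer[];
  // "all" lets ICE try direct and relayed paths; "relay" forces TURN only.
  iceTransportPolicy: "all" | "relay";
}

// Hypothetical helper: assembles the STUN/TURN portion of a peer config.
function buildPeerConfig(
  stunUrl: string,
  turnUrl: string,
  turnUser: string,
  turnCredential: string,
  forceRelay = false,
): PeerConfig {
  return {
    iceServers: [
      // STUN: lets the client discover its public address for direct paths.
      { urls: stunUrl },
      // TURN: relays media when no direct path can be established.
      { urls: turnUrl, username: turnUser, credential: turnCredential },
    ],
    iceTransportPolicy: forceRelay ? "relay" : "all",
  };
}

// Placeholder servers and credentials, for illustration only.
const config = buildPeerConfig(
  "stun:stun.example.org:3478",
  "turn:turn.example.org:3478?transport=udp",
  "demo-user",
  "demo-secret",
);

console.log(JSON.stringify(config, null, 2));
```

In a browser, an object of this shape could be passed to `new RTCPeerConnection(config)`. Signaling, media gateways, and codec selection sit outside this snippet and would be handled by other parts of the stack.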

The most widely used implementation is Google’s libwebrtc, which powers most browser-based WebRTC. However, for use cases that require low latency at global scale, very large numbers of concurrent sessions, and tight optimization against an AI speech model’s input and output characteristics, rebuilding the stack from scratch has become a viable option. OpenAI’s full reconstruction of its WebRTC Stack for the Realtime API is a prominent example.

Voice AI quality ultimately depends on both LLM inference performance and the design quality of the underlying WebRTC Stack.